What if we tied wealth taxation to customer satisfaction? Not net worth alone - but to whether the wealth was generated through genuine value creation or through extraction, monopolistic behavior, and regulatory capture.

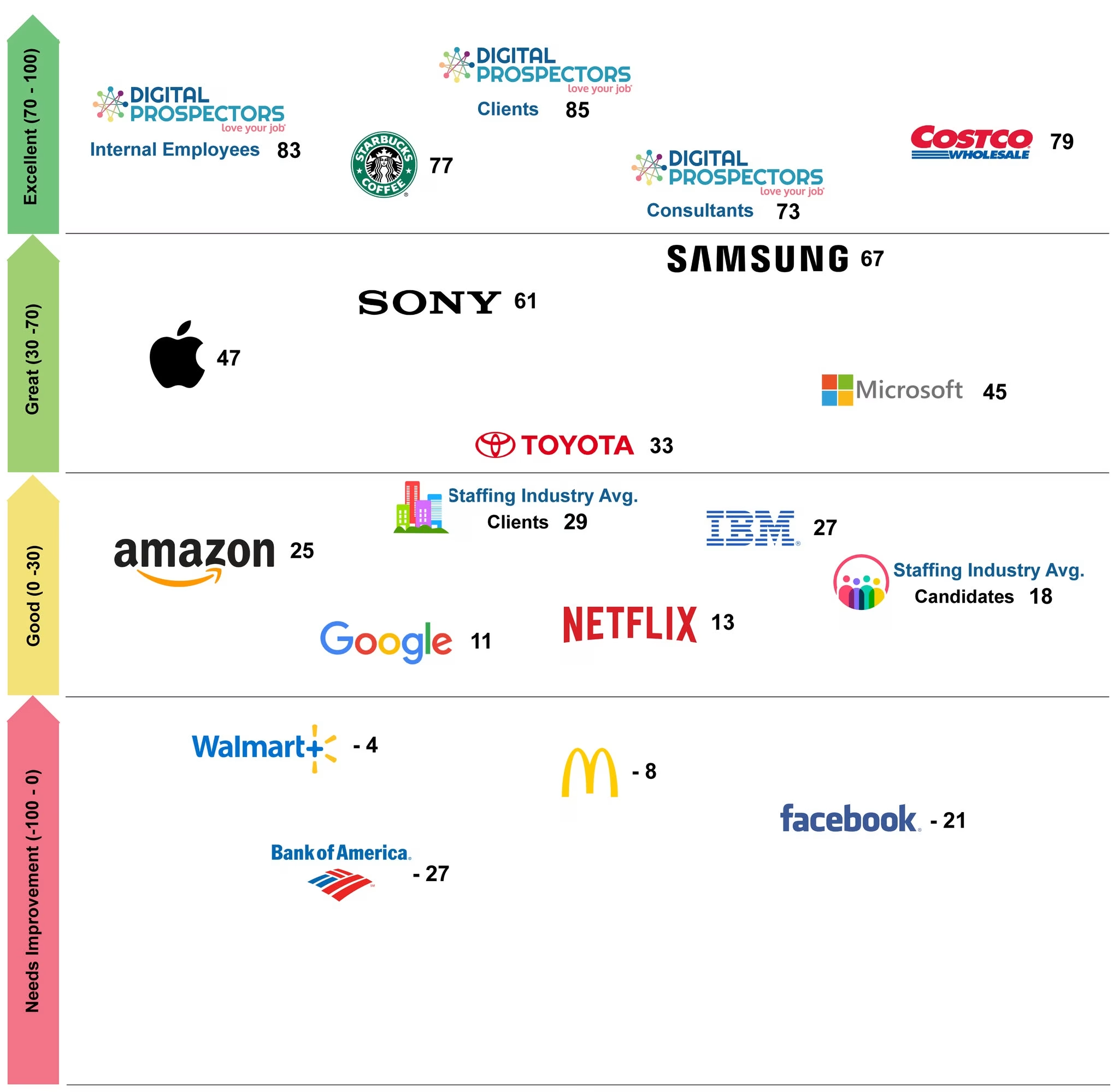

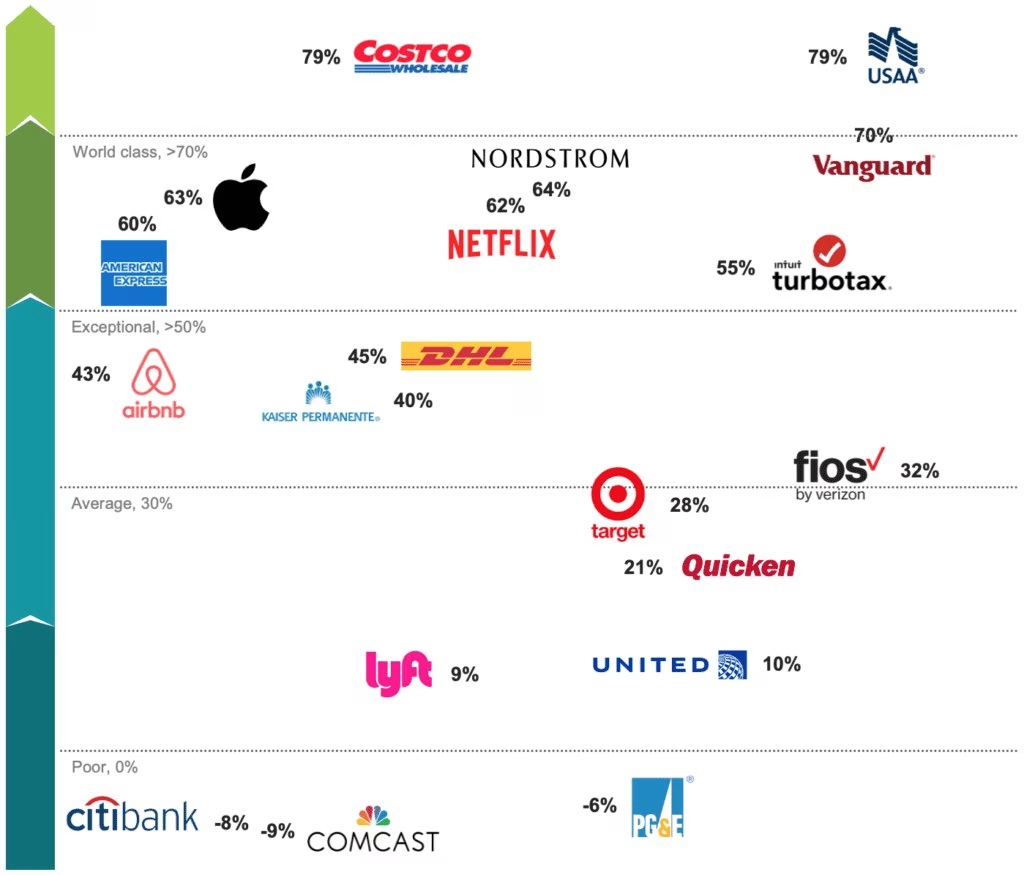

The basic idea: if your NPS score (or some equivalent customer satisfaction metric) is high, you get a tax break. If you've built a company that people love, you've probably created real value. If your NPS is low - if you're running a company people use because they have no choice - you pay more.

Why This Is Interesting

The proposal would distinguish between two kinds of billionaire wealth:

- Wealth created through genuine innovation - where the billionaire got rich because they made something people actually wanted

- Wealth extracted through monopolistic practices - where the billionaire got rich because they eliminated alternatives, lobbied against competition, or trapped customers

There's a real philosophical argument that these two things deserve different treatment. We probably want to reward the first and discourage the second.

Why This Probably Doesn't Work

Let me dismantle my own idea.

NPS can be gamed. Customer satisfaction metrics are notoriously manipulable. You can improve your NPS by carefully selecting who you survey, by offering incentives for positive responses, or by simply making the survey hard to find for unhappy customers. Any measure that carries tax implications will be optimized for the metric rather than the underlying reality.

Industries have inherent satisfaction disparities. Airlines will always have lower NPS than consumer software companies - not because airlines are more exploitative, but because air travel is stressful and delays are common. Defining "legitimate" satisfaction across wildly different industries is essentially impossible.

Government power problem. Giving any government the ability to define "legitimate" vs. "illegitimate" wealth creation is power easily weaponized. Political opponents could have their industries classified as extractive. Regulatory agencies could be captured and turned against disfavored companies. The cure might be worse than the disease.

The definition problem. What counts as "genuine innovation"? Microsoft's market dominance in the 1990s involved a lot of both genuine value creation and arguably anticompetitive behavior simultaneously. These things aren't separable.

So What's the Point?

The proposal isn't right. But I think asking the question matters.

The current debate about taxing billionaires focuses almost entirely on how much to tax, and almost never on what kind of wealth to tax differently. There's a real intuition worth exploring: that wealth generated by eliminating competition and trapping customers is categorically different from wealth generated by making things people want.

Any real implementation would need independent measurement, clear thresholds, anti-gaming provisions, and industry adjustments. It would be enormously complex and politically contentious.

But "we can't implement this cleanly" isn't the same as "the underlying distinction doesn't matter." I'm still thinking about it.

]]>